(This essay was originally published by the Newport Daily News (RI) on August 9, 2021.)

With COVID in retreat, I recently took a three-day trip to our nation’s capital with my 12-year-old grandson, Alex, to show him the sights and teach him some history. It proved to be a great tonic for rejuvenating not only one’s patriotism but also one’s hope in America’s future.

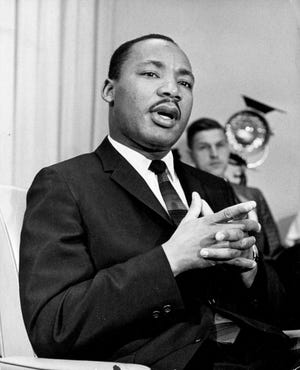

After visiting the Tomb of the Unknowns on the first afternoon, we set out on the second day, with the heat wave persisting, undeterred in our aim to see the main memorials on the National Mall: Vietnam War, Lincoln, Korean War (under renovation), Martin Luther King Jr., FDR, and Thomas Jefferson. All were magnificent and moving.

At the Lincoln Memorial, as we stood before the Gettysburg Address, I heard a man reciting the words. I turned to see a darker-skinned Latino American father reciting the Address to his young son as the mother looked on. This underscored for me the central role that American ideas, the American Creed, must play in any American Reconciliation: ideas, rather than race, religion, sex, gender, or country of origin. The New American has no particular skin color.

Alex and I stayed at a hotel in midtown, near McPherson Square and Farragut Square. In these urban parks we saw homeless people; regrettably, virtually all were darker-skinned Americans.

Ironically, a few blocks from these squares, directly outside our hotel on 16th Street, NW, was the newly established Black Lives Matter Plaza. These words, broad and bold, are painted on the pavement of a two-block section, yellow letters on a black background.

The evening after our return, I found myself longing for ice cream from Frosty Freez, so off I went with my wife and granddaughter to take part in that great Aquidneck Island summer tradition. At probably 80 feet, the line was the longest I had ever seen there. However, those of you who have tasted its delights know that the serving staff is ample and efficient. And so I waited my turn.

As I waited in the long line, a lighter-skinned American young man and a darker-skinned American young woman drove in and parked. Upon seeing the line, they laughed, nodded to each other, and departed.

As I walked back to the car with our ice cream treats, a teenage couple, a darker-skinned American girl with Asian features and a lighter-skinned American boy, passed me on their way to the line.

With the fresh patriotism and hope generated by my trip to the nation’s capital, I was further heartened to see these young mixed-race couples. Getting to know each other across the lines that separate us, and perhaps falling in love, is a critical requirement for the future of our divided country. It is something that can be fostered at all levels: at the federal and state levels by national and state service programs; at the community level by more town parades and celebrations, joint church and community activities, and school dances; and at the family level by more family dinners free of technology and prejudice.

My experience at Frosty Freez reminded me of the hope I feel when I see my lighter-skinned American nephew with his darker-skinned American wife, and especially the hope I feel when I see their two children. They are the color of the new, “race-less” Americans who can lead the way to an American Reconciliation. These New American grandchildren will surely change the hardened attitudes of their grandparents. As a grandfather, I can say categorically that it is very difficult to hate your grandchildren.

Mixed-race marriages, such as my nephew’s, have continued to rise over the past 50 years. In 1967, when the Supreme Court ruled in Loving v. Virginia that anti-miscegenation laws were unconstitutional, only 3% of all newlyweds were married to someone of another race or ethnicity, according to Pew Research. By 2015, that share had risen to 17%. In 2015, 10% of all married people were in a mixed-race or mixed-ethnicity marriage.

Further grounds for hope may be found in the large majority of Americans who now approve of interracial marriage. A 2018 YouGov/Economist survey found that only 17% of Americans opposed interracial marriage, a vast decrease from the 1950s.

Observing the younger generations, including my grandchildren, their friends, and my undergraduate students at Salve Regina University, also gives me hope. They seem less captive to prejudice and intolerance than my generation of early baby boomers, and even Gen X.

During a recent family game, one card asked: “If you could do one thing to help fix the world, what would it be?” I was pleasantly surprised to hear both of my grandchildren who were playing answer, “End racism.”

Grandfather of eight, Fred Zilian (zilianblog.com; Twitter: @FredZilian) is an adjunct professor of history and politics at Salve Regina University and a regular columnist.